Artificial intelligence in health and safety (HSE) is gaining momentum, but most organisations are struggling to turn interest into impact. Many have explored AI, seen demonstrations, or tested tools, yet little has changed in how safety is managed day to day. The challenge is not awareness; it is implementation.

This practical guide explains how to implement AI in health and safety in a way that delivers real operational value. It outlines what AI in HSE looks like in practice, from improving access to safety data through to enabling predictive safety, helping organisations move from reactive reporting to proactive risk management.

Why AI in Health and Safety Often Fails

Many AI initiatives in Health, Safety and Environment (HSE) fail to deliver value.

The issue is not the technology; it is the foundation. Organisations often jump to automation and predictive analytics while their data remains fragmented, incomplete, and inconsistent, spread across disconnected systems and difficult to access or trust.

In this environment, AI amplifies inefficiency rather than reducing it.

Effective AI in HSE requires progression. Data must be accurate, sufficiently complete, centralised, standardised, and accessible before it can be turned into usable insights and, ultimately, meaningful action.

If your data is fragmented or difficult to trust, AI will struggle to deliver results. Understanding the quality and structure of your HSE data is the first step to successful implementation.

A Step-by-Step Approach to Implementing AI in HSE

AI in health and safety should not be treated as a one-off project, but as a staged rollout of capability.

At FENNEX, we approach implementation using a simple principle: crawl, walk, run. This reflects how AI is adopted in practice, starting with making data accessible, then turning it into usable insight, and ultimately enabling action.

Each stage builds on the last, moving from visibility, to insight, to action, and increasing operational maturity at every step.

Step 1: Establish a Usable Data Foundation

Make your data usable and reliable

Before introducing AI, organisations must ensure their data is in a usable state.

In practice, this means moving away from fragmented spreadsheets and disconnected systems, and towards a centralised, structured environment where safety, assurance, and risk data is consistent, complete, and accessible.

This step is often overlooked, but it is critical. AI relies on high-quality, standardised data. If the underlying data is inconsistent or incomplete, the outputs will be too.
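
As a rough illustration of what checking for "inconsistent or incomplete" data can mean in practice, the sketch below measures how often required fields are filled in and finds labels that differ only by case or spacing. The field names, sample records, and the `REQUIRED_FIELDS` list are assumptions made for this example, not a real schema.

```python
# Hypothetical required fields for an incident record (an assumption, not a real schema).
REQUIRED_FIELDS = ("id", "date", "site", "severity")

def completeness(records):
    """Return the share of records with a non-empty value for each required field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in REQUIRED_FIELDS}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / total
        for field in REQUIRED_FIELDS
    }

def inconsistent_labels(records, field):
    """Group values that differ only by case or surrounding whitespace."""
    variants = {}
    for r in records:
        value = r.get(field)
        if value:
            variants.setdefault(value.strip().lower(), set()).add(value)
    return {key: sorted(vals) for key, vals in variants.items() if len(vals) > 1}

# Illustrative sample data only.
records = [
    {"id": 1, "date": "2024-03-01", "site": "Platform A", "severity": "Low"},
    {"id": 2, "date": "2024-03-04", "site": "platform a ", "severity": ""},
    {"id": 3, "date": "", "site": "Platform B", "severity": "High"},
    {"id": 4, "date": "2024-03-09", "site": "Platform B", "severity": "low"},
]

print(completeness(records))
print(inconsistent_labels(records, "site"))
```

Checks like these are deliberately simple. Their purpose is to show where standardisation is needed before any AI capability is layered on top.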

At this stage, the focus is not on AI itself, but on creating the conditions for it to deliver value.

Step 2: Introduce Conversational AI (Crawl)

Unlock access to your data

Once a solid data foundation is in place, the first meaningful application of AI is improving access to that data.

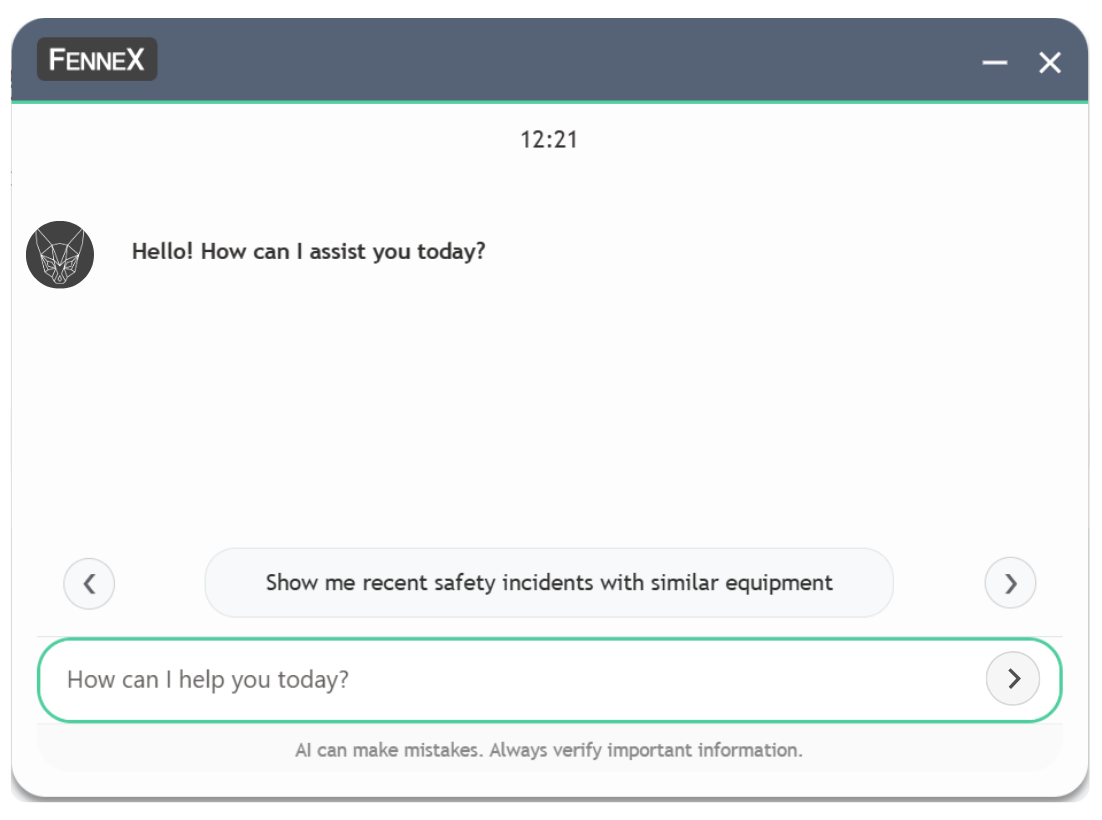

Conversational AI acts as an interface layer, allowing users to interact directly with HSE data rather than navigating multiple systems. Similar to tools like ChatGPT, users can ask questions in natural language, but instead of searching the open internet, the system draws from your internal data sources and selected industry datasets.

This is what sets it apart from traditional search. Rather than relying on keywords or predefined filters, conversational AI understands context, retrieves relevant information across systems, and presents it in a usable format.

In practice, a safety manager can request a summary of recent incidents, identify overdue audits, or review risk trends across an asset in seconds. The system retrieves and consolidates this information from multiple sources.
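
The consolidation step behind such a request can be sketched as follows. This example covers only the retrieval and merging of records from two sources, not the natural-language understanding. The data, field names, and the `asset_overview` function are hypothetical.

```python
from datetime import date, timedelta

# Hypothetical extracts from two separate systems (assumed field names).
incidents = [
    {"asset": "Platform A", "date": date(2024, 5, 2), "summary": "Dropped object"},
    {"asset": "Platform A", "date": date(2024, 3, 11), "summary": "Slip on walkway"},
    {"asset": "Platform B", "date": date(2024, 5, 20), "summary": "Minor gas release"},
]
audits = [
    {"asset": "Platform A", "due": date(2024, 4, 30), "completed": False},
    {"asset": "Platform A", "due": date(2024, 6, 15), "completed": False},
    {"asset": "Platform B", "due": date(2024, 4, 1), "completed": True},
]

def asset_overview(asset, today, lookback_days=30):
    """Combine recent incidents and overdue audits for one asset into a single view."""
    cutoff = today - timedelta(days=lookback_days)
    recent = [i["summary"] for i in incidents
              if i["asset"] == asset and cutoff <= i["date"] <= today]
    overdue = [a["due"].isoformat() for a in audits
               if a["asset"] == asset and not a["completed"] and a["due"] < today]
    return {"asset": asset, "recent_incidents": recent, "overdue_audits": overdue}

overview = asset_overview("Platform A", today=date(2024, 5, 25))
print(overview)
```

In a conversational interface, a question such as "what is outstanding on Platform A?" would be translated into a query of this kind, with the result summarised back to the user.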

This removes one of the biggest inefficiencies in HSE (time spent finding and compiling information) while creating a unified view across previously disconnected processes.

It also begins to surface gaps in data quality and structure, which can be addressed before moving to more advanced capabilities.

Step 3: Apply Generative AI to Core Workflows (Walk)

Turn data into usable outputs

With data accessible, the next step is reducing the effort required to turn that data into usable outputs.

Generative AI enables systems to create structured content automatically based on operational inputs. One of the most impactful use cases in HSE is within assurance.

Policies, standards, and guidelines are typically static documents that require significant manual effort to translate into audits or checklists. This process can take days and is often inconsistent.

Generative AI removes that bottleneck. Within platforms like FennexSafe™, policies and procedures can be converted into structured assurance checklists or audit tasks in minutes. Requirements are extracted, organised, and standardised automatically, ready to be deployed across assets or projects.

Crucially, this does not remove human oversight. AI-generated outputs should be reviewed, edited, and approved before use. Built-in verification steps ensure teams remain in control, using AI to accelerate and support decision-making, not replace it.
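
The draft-then-approve pattern can be illustrated with a small sketch. Note the substitution: a simple keyword rule stands in for the generative model here, and this is not how FennexSafe™ or any specific product works. The `ChecklistItem` class, status values, and sample policy text are assumptions for illustration.

```python
import re
from dataclasses import dataclass

@dataclass
class ChecklistItem:
    requirement: str
    status: str = "pending_review"  # nothing is deployable until a person approves it

def draft_checklist(policy_text):
    """Draft one checklist item per sentence containing 'must' or 'shall'.

    A keyword rule is used as a stand-in for a generative model.
    """
    sentences = re.split(r"(?<=[.!?])\s+", policy_text.strip())
    return [ChecklistItem(s) for s in sentences
            if re.search(r"\b(must|shall)\b", s, re.IGNORECASE)]

def approve(item, reviewer_edit=None):
    """Record a human reviewer's approval, optionally with edited wording."""
    if reviewer_edit:
        item.requirement = reviewer_edit
    item.status = "approved"
    return item

# Illustrative policy text only.
policy = (
    "This procedure covers work at height. "
    "Harnesses must be inspected before each use. "
    "Supervisors shall verify permits daily. "
    "Further guidance is available on request."
)

items = draft_checklist(policy)
approve(items[0])  # the second item stays pending until it is reviewed
deployable = [i.requirement for i in items if i.status == "approved"]
print(deployable)
```

The design point is the default status: every generated item starts as pending, so only reviewed items can reach an audit.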

This significantly reduces administrative burden while improving consistency and coverage. HSE teams spend less time interpreting documents and more time verifying compliance and addressing gaps.

However, while this stage improves efficiency and consistency, it does not yet change how risk is managed at a systemic level.

Step 4: Embed Agentic AI into Operations (Run)

Make your data actionable

The final stage is where AI becomes operationally embedded.

Agentic AI continuously monitors data across safety, assurance, and compliance processes, identifying patterns and deviations that indicate emerging risk.

This is where predictive safety begins to take shape.

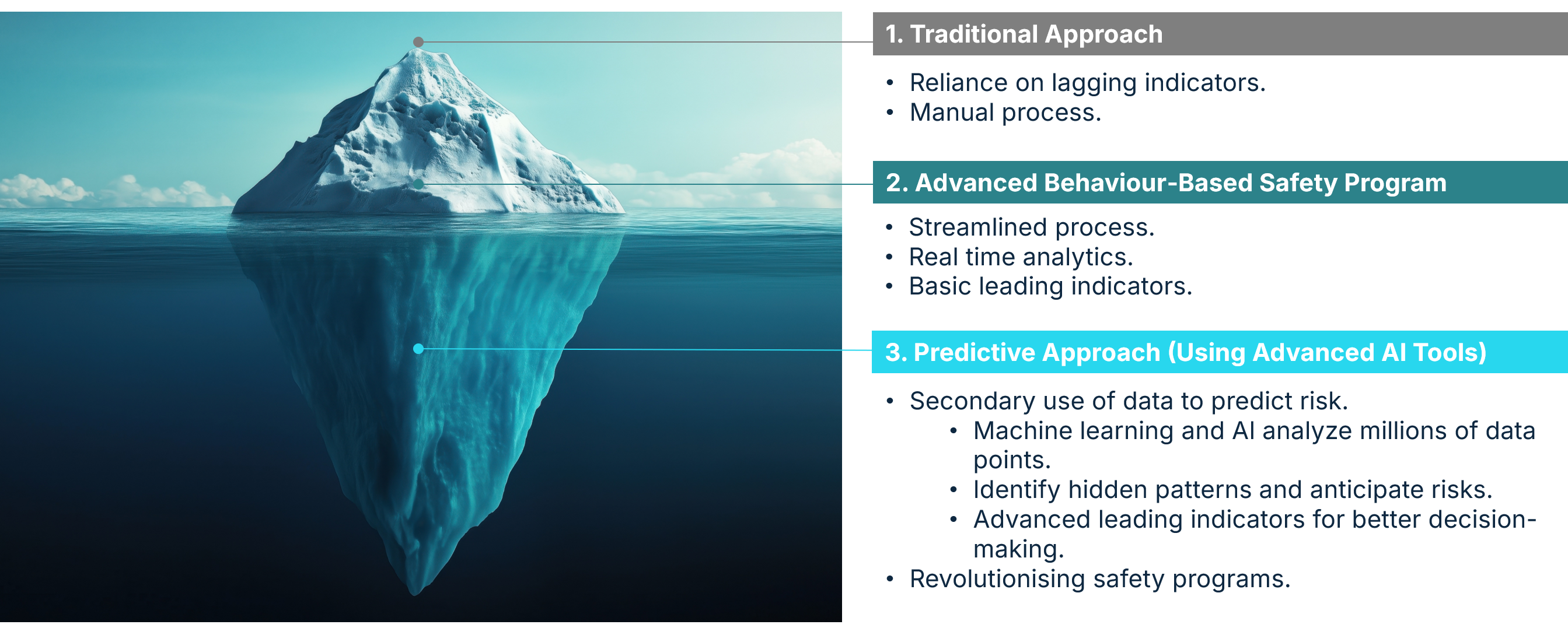

Instead of relying on lagging indicators, AI analyses leading indicators across datasets. A rise in minor observations, combined with overdue corrective actions and reduced audit activity, may signal deteriorating conditions in a specific area.
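
A minimal sketch of how these three signals could be combined is shown below. It is a hand-written rule, not a trained predictive model, and the thresholds, field names, and monthly figures are illustrative assumptions.

```python
def warning_signals(previous, current, observation_rise=1.5, audit_drop=0.5):
    """Return the leading indicators that moved in the wrong direction.

    Thresholds are illustrative assumptions, not recommended values.
    """
    signals = []
    if current["minor_observations"] >= observation_rise * max(previous["minor_observations"], 1):
        signals.append("rising_minor_observations")
    if current["overdue_actions"] > previous["overdue_actions"]:
        signals.append("more_overdue_actions")
    if current["audits_completed"] <= audit_drop * previous["audits_completed"]:
        signals.append("reduced_audit_activity")
    return signals

def is_deteriorating(previous, current):
    """Flag an area only when all three signals coincide."""
    return len(warning_signals(previous, current)) == 3

# Hypothetical monthly figures for one area.
last_month = {"minor_observations": 8, "overdue_actions": 2, "audits_completed": 6}
this_month = {"minor_observations": 14, "overdue_actions": 5, "audits_completed": 2}

print(warning_signals(last_month, this_month))
print(is_deteriorating(last_month, this_month))
```

Requiring the signals to coincide is one possible design choice. A real system might instead weight each signal or learn the combination from historical incident data.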

These signals are not isolated; they are connected.

At this stage, AI moves beyond retrieval and generation into pattern recognition. By analysing large volumes of structured HSE data, it can test hypotheses and identify leading indicators that consistently precede incidents. This is where predictive models are formed, turning fragmented data into meaningful risk signals that would be impractical to detect manually.

What differentiates this stage is the response.

The system can initiate targeted actions automatically. Inspections can be triggered, corrective actions assigned, and issues escalated based on severity and context. Individuals can be prompted when intervention is required.
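
One way to picture severity-based routing is the sketch below. The `RiskSignal` class, the three-level severity scale, and the action wording are hypothetical. The function only proposes actions and leaves execution and sign-off to whatever workflow the organisation uses.

```python
from dataclasses import dataclass

@dataclass
class RiskSignal:
    area: str
    severity: str  # "low", "medium" or "high" (assumed scale)
    owner: str

def plan_actions(signal):
    """Map a risk signal to proposed actions; severity decides how far it escalates."""
    actions = [f"notify {signal.owner} about {signal.area}"]
    if signal.severity in ("medium", "high"):
        actions.append(f"schedule inspection of {signal.area}")
        actions.append(f"assign corrective action to {signal.owner}")
    if signal.severity == "high":
        actions.append(f"escalate {signal.area} to the HSE manager")
    return actions

# Illustrative signal only.
signal = RiskSignal(area="Compressor deck", severity="high", owner="area supervisor")
for action in plan_actions(signal):
    print(action)
```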

In platforms like FennexSafe™, this intelligence is embedded directly into modules such as assurance, risk management, and corrective actions, ensuring that insights are consistently translated into action across operations.

This transforms HSE systems from passive tools into active systems of control.

AI is most effective when it works alongside people, not instead of them. In HSE, control cannot be outsourced. What AI does is reduce noise, highlight what matters, and ensure nothing is missed, while teams remain firmly in control of decisions and actions.

Step 5: Build Trust and Shift How Safety is Managed

Shift from reporting to proactive risk management

At this stage, the challenge is no longer technical; it is organisational.

For AI to deliver value in HSE, it must be trusted and consistently used. That means teams need to understand how outputs are generated, where human judgement is required, and how AI supports, rather than replaces, decision-making.

Trust is built through transparency and control. When users can review, validate, and act on AI-driven insights, confidence grows. Over time, this shifts how teams engage with safety data, from manually compiling reports to actively monitoring and managing risk.

This is where behaviour begins to change.

Safety teams move from reacting to incidents to focusing on early signals and preventative action. Time is no longer spent chasing data, but on addressing what the data is showing. Decision-making becomes faster, more consistent, and better informed.

The result is not just improved efficiency, but a fundamental refocus of HSE: from reporting and compliance to continuous risk management and proactive intervention.

What This Looks Like in Practice

In a traditional HSE environment, identifying risk often relies on reviewing reports, audits, and incidents after they occur. Information is spread across systems, and connecting the dots takes time.

With AI embedded, that process changes.

A safety manager no longer needs to compile data manually. Instead, they are presented with emerging risk signals based on patterns across observations, audits, and corrective actions. Areas of concern are flagged early, with recommended actions already in place.

This shifts the role of HSE teams. Less time is spent gathering information, and more time is spent acting on it. Oversight becomes continuous, and intervention happens earlier.

How to Get Started with AI in HSE

Start with your data, not the technology.

Focus on centralising and structuring safety, assurance, and risk data so it is consistent and accessible. From there, introduce conversational AI to improve visibility, followed by generative AI to streamline high-effort workflows.

Only once these foundations are in place should you move towards agentic AI and predictive safety.

This ensures each step delivers value while building towards a more advanced and proactive model.

Conclusion

Artificial intelligence in health and safety is not a single implementation; it is a shift in how risk is understood and managed.

Organisations that follow a structured approach can move beyond fragmented systems and reactive reporting, towards continuous visibility and proactive control.

The real value of AI is not faster reporting. It is the ability to identify risk earlier, act sooner, and prevent incidents before they occur.